Wednesday, August 31, 2011

Controlling by cooperating

The genome contains protein coding sequences, but these are only a small percent of our DNA. Another part of DNA consists of generally very short sequences in the vicinity of a protein-coding gene, that are used to control when (in which cells) the nearby gene is to be transcribed into messenger RNA and then translated into protein. These regulatory DNA sequences work by being bound (grabbed physically) by proteins called transcription factors (TFs). A TF is a protein whose physical and chemical properties make it bind (find and stick to) specific regulatory elements (REs) like (to make one up) CCTGCA.

The idea has been that such regulatory elements are bound by a specific TF and if that TF is itself being produced by the cell, it will grab the RE (stick to CCTGCA) and cause the gene to be transcribed. If the TF isn't being produced by the cell, the CCTGCA remains naked and the gene inactive.

We're oversimplifying greatly, but this is the general idea. But what if there is more than one TF that recognizes the same RE? Then, our hyperDarwinian friends might presume that the two TFs compete to bind the sequence, with some sort of consequence for gene expression. This would then set up an opportunity for natural selection to choose a winner, for example, so that the loser TF would lose its function.

But instead, as shown schematically in the figure, TF A, on the left, binds to a specific RE (the blue box along the black DNA line), which helps open up the wrapped-up DNA (called chromatin--the black line of DNA wrapped around some packaging proteins represented by the pink ball) near a particular gene. That event allows another complex of proteins (the remodeling complex in the figure) to modify the DNA so that TF A gives way, enabling TF B to get access to the same RE sequence. The nearby gene is then expressed.

This is just one experimental example from a particular laboratory setting, rather than an analysis of such activities generally, so we don't know how pervasive such cooperation is. This intricate cooperation is to us only one more instance of a phenomenon that we believe is far more pervasive, and more important to understand in terms of biology, than competition. Competition may, of course, help establish cooperative interactions if they are useful--and that would be the standard Darwinian theory. But every day powerful molecular technologies are finding new examples of the kinds of extensive cooperative interaction that occur between the passive sequence of DNA and the very dynamic activities of organisms. Without such cooperation, we wouldn't be here to write these posts....and you wouldn't be here to read them!

Tuesday, August 30, 2011

Professors on the take? Yes, but that's not the point!

These noble representatives of our enlightened populace are holding forth, as if they knew anything at all about the subject, against human-caused climate change and against evolution. They misrepresent the evidence, which means they listen to advisers, since they are politicians rather than scientists, and can't be expected to do more than respect the evidence and ask responsible and knowledgeable people to explain it to them.

They use incomplete aspects of the evidence as if it meant that the overall evidence is wrong. They don't question their own willingness to accept very incomplete evidence when it comes to subjects like economics and religion, because in all these cases they color their interpretations to serve their personal agendas. As we've said in an earlier post, it is perfectly legitimate to say that we'll just ignore climate change because it lets us live our current lives, and let future generations deal with whatever their lives have to face. And it's perfectly legitimate for them to say that they're unwilling to think about evolution, because prayer gives them a sense of comfort and order in the world, and that's enough for them.

But something else they do is quite wrong, even then. As part of their purported argument against the evidence, Krugman notes that they argue that the scientists are in a sense in a cabal to perpetuate bad evidence because they're on the take--that is, they are at the public funding trough and they want to stay there, indeed, to increase what's in the trough.

Of course scientists are, in this sense, on the take. If we have to live on grants, we need grants. If we believe in science or have to live on the public's belief in science, we have to tout our work and have to lobby for more funding.

We criticize genetics all the time for this kind of vested interest--in universities, in the media, and in corporate entities like Pharma. It's something to be criticized for, but in a sense what should be changed (if possible) is the way things are funded.

The fact that we lobby for our interests does not, in itself, make our claims for our science wrong. And the accusation that it does is what's wrong with the Republican anti-science movement. Scientists will lobby for funds for correctly understood research as well as for incorrectly understood research. If the Big R's of American politics want to oppose the science itself, and they can find legitimate grounds for doing that, it would be fair game. But the accusation of vested interests, even if true, is an irrelevant red herring--and is being used by demagogues, not people sincerely concerned with leading national policy appropriate to the facts of nature--or deciding to ignore the facts of nature.

Monday, August 29, 2011

A small circle of friends

Everything we're learning about the relationship between genes and the biological traits they affect makes the number of contributing effects larger, more complex, and more specific to the species, population, individual, or even cell than simple models of genetics generally specify.

The degree and subtlety of this is shown by a recent paper by TR Mercer et al., in the current (Aug, 2011) issue of the prominent journal Cell. The authors show that even the tiny mitochondrial genome, a DNA ring molecule contained, one copy each, in the hundreds to thousands of mitochondria within a cell, is used in complex ways.

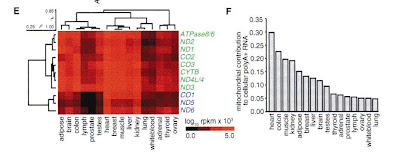

The human mtDNA is 16569 nucleotides long, and contains DNA sequence that codes for 37 genes of various types. The investigators identified mRNA copies of these genes in different cells from 16 different human tissues, to determine which mtDNA genes were being used in each tissue and at what relative level (how many copies of each of the coded proteins were being made).

This figure shows on the left in red-shade, the presence and concentration of 11 tested genes (rows labeled on the right of the figure), in the 16 tested tissues (columns, labeled below the figure). You can see the patchwork of variable intensity and variable tissue expression. The rows and columns have been sorted to cluster like patterns. On the left is a tree-like diagram of tissue-expression similarities, with the rows of overall greatest similarity being shown near each other. Similarly, the cluster diagram shown atop the figure is of the overall relative similarity of expression levels. Clearly different genes are expressed in partly correlated ways in different tissues.

The part of the figure on the right shows, for the same tissues, the relative total contribution of genes expressed by the mtDNA compared to all genes expressed in the same cells. The highest fraction, 30%, the tall bar on the left, represents high relative mtDNA gene levels in heart tissues, while these genes have the least relative expression, only 5%, in lung tissue, the short bar on the right.

The size and gene content of the human mtDNA has long been known, but not the relative levels of the usage of these genes. This paper is mainly a presentation of the results (and an online data base one can use to get actual data), rather than an explanation of the pattern. But if mtDNA is largely related to energy usage, one might expect higher activity in more energy demanding tissues than in more passive types of cells. Actually, this is not what the data show: the brain, an energy sink if there ever was one, is dead center in its mtDNA usage compared to the other tissues. This seems an important fact, or perhaps a warning about the results, and the authors seem rather blithely to skip over this fact in their brief description of the relative usage data.

The point here, however, is not to quibble about such details, because if there are mistakes or inaccuracies and the like, future work will figure them out. Our point is that mtDNA, the simplest part of our genomes in a sense, adds yet another level of complexity to the connections between human phenotypes and their underlying genotypes. No new principles of nature are revealed, but there is another tack in the lid of the simple-causation box.

The mitochondrion arrived 1-2 billion years ago as an invader of organisms' cells, and has become a vital and permanent part of the genome, even if not directly in chromosomes in the nucleus (mitochondria are in the cytoplasm, not the nucleus). Their coded proteins interact with proteins coded by genes in the nucleus, to make the cell work, and in particular to provide the cell its chemical energy. These functions are very ancient and stable. Nonetheless, this little circle of friends, of interacting genes, is used in many different ways, even among the tissues of a given individual.

The correlations among expression levels show an example of cooperation that has been established by evolution, and that varies even among the tissues in our own bodies. If all you care about is the net function--how lungs allow certain levels of activity, or how fast hearts beat under various circumstances--then these details simply show one of the levels of underlying complexity in attaining that result, and will be of little use to you. But it is of use to know that this complexity is involved because complexity also implies some redundancy or buffering.

These investigators did not study a diversity of conditions to see how stable the expression values they obtained are, nor how similar to other species. That kind of information may be hard to obtain, but is for the future, and we are very likely to find that even within a cell there will be substantial variation.

But at least this paper begins to reveal the revelry that takes place even within this little circle of friends....

Friday, August 26, 2011

Some ants are more equal than others

Individually coded ants (photo from JEB).

Coordination of biological processes in groups that lack the ability to communicate globally among themselves, such as insect societies, has long been thought to be due to self-organization. That there is division of labor in these societies has long been known, but it has been thought that within strata, every individual must know the same rules, and be equally able to follow them. And the general assumption has been that they are basically chemical automata in this respect--all molecular recognition rather than open cognition. Indeed, human exceptionalism is so widespread in science that one often sees it viewed as surprising that any other species can think (much less has 'mind'). So, this experiment, while quite basic, is interesting.

It's starting to look as though ants not only learn, but recognize that some of their peers may have more knowledge than others. That is, some may play more influential roles in key behaviors, including decision-making. Stroeymeyt et al., at the University of Bristol, looked at this question in the house-hunting ant, Temnothorax albipennis. These ants live in precarious places such as under tree bark or in rock crevices, and their homes are frequently destroyed, and they thus need to rebuild at a new site.

When it's time to move, scouts go out from the nest, or hive in the case of bees, to find new sites. Previous work on this behavior focused on the colony, not the individual, so it has always been thought that every scout had an equal chance of happening upon the best new site, and thus being followed by the rest of the group. But Stroeymeyt et al. have discovered that Temnothorax ants seem to acknowledge that some scouts are more experienced than others, and thus should be allowed to make the decision as to where to move.

Temnothorax ants investigate their environment preemptively, collecting information about potential nest sites before they must move, which apparently increases their chances of choosing a good site (though it does sound as though none of their choices are all that good, or they wouldn't have to be moving all the time!).

Information about suitable sites is initially gathered by the workers that discover and explore the sites. It could then potentially be stored in two, non-exclusive forms: in a common repository of information, such as pheromones (social information) marking the nest itself or leading to it, or in the memories of informed workers and/or individual-specific chemical marking (private information), frequently used by Temnothorax ants. In the latter case, informed individuals accessing this information could play a key role during later emigrations.

To look at the role individuals play in this decision, Stroeymeyt et al. painted tiny uniquely colored dots on the backs of all the workers in colonies they brought into the lab. The idea was to determine "the role of specific individuals and the relative importance of private versus social information".

They brought 30 colonies into their lab, and moved them into nests consisting of 5 interconnected Petri dishes. They were given a week to explore the area. Their nest was then destroyed, inducing them to relocate. They had the choice of a nest just like the one that had been destroyed, that some of their number had previously investigated, and an identical one none of them had ever seen before. A new nest was considered chosen if all brood items were moved to that site, but not if items were split between two nests.

Exploration throughout the week was captured on webcams, so that it was known which individuals explored, and thus collected 'private' information about new nest sites. These were considered 'informed' workers. More informed workers were those that visited the site most; naive workers never visited it.

They also investigated "the relative importance of navigational memory and individual-specific chemical trails in the early discoveries of the familiar nest by informed workers". They did this by laying sheets of acetate between two nests, then rotating them, thus changing the location of previously laid chemical trails. The idea was to test the relative importance of chemical versus visual orientation clues.

As it turned out, familiar nests were discovered first, and seemed to be preferred over unfamiliar nests. Naive workers were as likely to find the unfamiliar nest as experienced workers, and took an equal amount of time to find either. Informed workers, however, were much more likely to find the familiar nest quickly. So, the researchers conclude that information collected by individual ants -- private information -- is important in leading them to new sites.

And they discovered that the ants do seem to follow chemical clues, since colonies emigrated significantly faster when the acetate under them, containing their chemical trail, was not moved, although they still preferred the familiar nest site over the unfamiliar, indicating that they were in fact following ants with private personal knowledge, which seems to include visual cues. And, the existence of private knowledge seems to be recognized by other ants in the colony, which speeds emigration to the site preferred by the experienced workers.

So, yes, the choice of a new site seems to be a collective decision, but some individuals have more influence over that decision than do others. We've blogged before about ants, and whether they think (we concluded that they do). We noted then what Darwin said in Descent of Man (1871) about ants, and it's worth repeating:

...the wonderfully diversified instincts, mental powers, and affections of ants are notorious, yet their cerebral ganglia are not so large as the quarter of a pin's head. Under this view, the brain of an ant is one of the most marvelous atoms of matter in the world, perhaps more so than the brain of man.

The general idea about cognition and consciousness (often implicitly equated) is that it's a phenomenon of large-scale interactions among synapsed neurons: you need zillions of these to have 'thoughts' or make cognitive, evaluative judgments that go beyond mechanical responses to, say, odorant detection. But if the pinheads of ants can do this, perhaps our ideas about what makes this possible have been too closely associated with our own high encephalization (big heads relative to body size) and egotism. Maybe quality rather than quantity in neuronal function is more important than we've thought, or in ways we haven't yet thought of.

Thursday, August 25, 2011

The food industry trumps public health -- again

In large part, as is turning out to be the theme of the week on MT, this has to do with industry pushing back. Yes, the impact of non-communicable disease on morbidity and mortality, not to mention national economies, is great (as Deborah Cohen says in the piece, "One estimate is that $84bn (£51bn; €59bn) of economic output will be lost between 2005 and 2015 as a result of non-communicable diseases"), but the effects on industry of curtailing the use of sugar, salt, processed foods and so forth are of more concern to powerful industry interests. And they are doing what they can to ensure this meeting fails.

As Cohen writes,

In the run up to the UN summit on non-communicable diseases, there are fears that industry interests might be trumping evidence based public health interventions. Will anything valuable be agreed?

The hope had been that, at the very least, with the spotlight on non-communicable diseases, the meeting would make them more visible generally, turning this into a teachable moment in terms of disease prevention. But pressure from the food industry is making it unlikely that governments are going to sign onto the commitment of resources for the health education campaigns that would be required to follow up.

At a UN meeting in New York for representatives of charities and the public (called “civil society” in global health) in June--staged to allow advocates to have their say on the final political declaration [prepared before the meeting convenes]--many of the tabled speakers came from groups either representing industry or funded by industry. Speakers included representatives of the International Federation of Pharmaceutical Manufacturers and Associations, the International Food and Beverage Alliance, and the World Federation of the Sporting Goods Industry. A list of those attending the September summit on behalf of civil society also includes representatives of industry, the BMJ has learnt. GlaxoSmithKline, Sanofi-Aventis, and the Global Alcohol Consumers Group are included within the official US delegation. And drinks companies Diageo and SABMiller are coming from the UK.

And, there is conflict between the 'G77', the group of lower income states, who argue for the reduction of saturated fats in processed products, and the US, Canada, Australia, and EU, responding to pressure from the food industry. At best, industry is willing to accept voluntary cutbacks.

Bill Jeffery, from International Association of Consumer Food Organisations, says that the UN and WHO need to put up firewalls between their policy making processes and the alcohol and food companies “whose products stoke chronic diseases” and the drug and medical technology companies “whose fortunes rise with every diagnosed case.”

“If national leaders embrace lame vendor-friendly voluntary ‘solutions’ instead of effective regulations governing advertising, product reformulation, package labelling, government procurement, and VAT reforms, public health and national economies will strain under the burden of NCDs for generations to come,” he said.

There are political issues as well. Money invested in NCDs is money diverted from infectious diseases and maternal and child health, and this is being resisted by many African countries, for whom NCDs are a less pressing issue, to date.

Now, whether or not it makes any material difference what the UN pronounces on this subject is a valid question. Goals put forward by such bodies are often little but words. But, the fact that industry seems to be pushing hard to prevent even words from being put forward suggests that they at least think UN action might be more than just a document at the end of the day.

It's not that any of these interests are necessarily invalid. The food industry has a right to profit from their products, and likewise the alcohol and tobacco industries. A lot of people make their living working in such industries, and countless people enjoy their products. But it is also true that food and alcohol consumption patterns lead to very large health-care bills, and we all one way or another have to foot that bill, so the industry is not the only entity with a legitimate interest in the discussions. What the relative costs and benefits are, and who should bear them, or how, are part of the equation, or should be. Fair apportionment could be based on item-specific taxes, behavior-based health care premiums, or whatever. But they should be based on considering the whole situation.

The results of a meeting on preventing and reducing non-communicable diseases should concern just that, and not be derailed (or pre-railed) by other, competing interests before the results are even presented. If governments ultimately decide that industry interest trumps public health, fine (well, in our view, not fine, but governments do have to weigh competing interests), but at least it should be clear that that's what's happening. Instead, when, according to Cohen, "PepsiCo have secured the prime side-event slot at the UN meeting: a breakfast event from 8-10 am on the morning of the summit", and industry is helping mold the final product, public health interests don't even get a clear shot.

Wednesday, August 24, 2011

Obesity is a public health problem, not a genetic one

Oddly enough, it seems that the length of time people are obese (a BMI, or weight-for-height index, of 30 or more) -- that is, how long people are exposed to the risk factor -- has never previously been taken into account. Abdullah et al. looked at the number of years lived with obesity, and its association with mortality from any cause, as well as from selected causes, such as cardiovascular disease or cancer. The researchers used data from the Framingham Cohort Study, which has followed up a population of about 5000 individuals every 2 years for about 50 years. (Framingham is a project that will never die, but that's another story).

It turns out that for every additional 2 years of being obese, risk of mortality increases.

The adjusted hazard ratio (HR) for mortality increased as the number of years lived with obesity increased. For those who were obese for 1–4.9, 5–14.9, 15–24.9 and ≥25 years of the study follow-up period, adjusted HRs for all-cause mortality were 1.51 [95% confidence interval (CI) 1.27–1.79], 1.94 (95% CI 1.71–2.20), 2.25 (95% CI 1.89–2.67) and 2.52 (95% CI 2.08–3.06), respectively, compared with those who were never obese. A dose–response relation between years of duration of obesity was also clear for all-cause, cardiovascular, cancer and other-cause mortality. For every additional 2 years of obesity, the HRs for all-cause, cardiovascular disease, cancer and other-cause mortality were 1.06 (95% CI 1.05–1.07), 1.07 (95% CI 1.05–1.08), 1.03 (95% CI 1.01–1.05) and 1.07 (95% CI 1.05–1.11), respectively.

The association remained significant even after adjusting for factors like smoking, age, current BMI, cardiovascular disease, cancer and diabetes. This seems to mean that there's an effect on length of life even beyond accounting for these other diseases. The authors propose a number of possible pathways through which obesity duration may have an effect on risk of mortality, including increased exposure to "endogenous production of reactive oxygen species and oxidative DNA damage, alterations in carcinogen-metabolizing enzymes, alteration in endogenous hormone metabolism and partial exhaustion of beta cells, with the resultant insulinopenia causing depressed glucose oxidation and impaired glucose tolerance."
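To get a feel for what a per-2-year hazard ratio could imply over longer spans, here is a rough sketch. It assumes (our assumption, not something stated in the passage quoted above) that the effect compounds multiplicatively, as it would in a log-linear proportional hazards model. The function name and the chosen durations are ours, purely for illustration.

```python
def compounded_hr(hr_per_step: float, years: float, step_years: float = 2.0) -> float:
    """Hazard ratio implied after `years`, assuming each `step_years`
    of obesity multiplies the hazard by `hr_per_step`."""
    return hr_per_step ** (years / step_years)


# All-cause HR per additional 2 years of obesity, as quoted in the text.
HR_ALL_CAUSE = 1.06

# Illustrative durations only (hypothetical, not categories from the paper).
for years in (2, 10, 20, 30):
    implied = compounded_hr(HR_ALL_CAUSE, years)
    print(f"{years:>2} years obese -> implied all-cause HR {implied:.2f}")
```

The compounded values are not expected to line up with the duration-category HRs quoted above, which come from a separate categorical comparison against people who were never obese.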

Consequently, any association between duration of obesity and mortality might be expected to be partially explained by intermediate variables in the causal pathway to mortality such as blood pressure, serum cholesterol and serum glucose, with a longer duration of obesity potentially linked to an increased risk of chronic diseases such as diabetes, cardiovascular disease (CVD) and cancer.

The authors conclude that, because onset of obesity is happening at earlier and earlier ages, obesity prevention strategies need to be stressed.

We would agree with this conclusion. Millions of dollars have been spent on the genetics of obesity. Hundreds of candidate genes have been suggested, and yet, obesity rates are still increasing. It is grossly clear that lifestyle makes the difference that matters by far the most, not genetic susceptibility (in earlier times, when obesity was much less common, many diseases related to obesity, and hence death due to them, were much less frequent). Obesity is a public health problem, not a genetic one. If we slimmed down -- or better yet, prevented obesity in the first place -- those who for genetic reasons simply can't, would have truly genetic problems that would then be much easier to understand at the gene level, and perhaps even to design gene-informed therapies and interventions.

And the resources spent on so much genetic research on obesity, largely an excuse for not tackling the real problem, could have had far more impact. The first studies to show clearly that obesity was a genetically complex trait may have been worth their cost. But we know that now. Now it's time to stop the epidemic, and genetics isn't going to do it.

Tuesday, August 23, 2011

Relying on the "experts"? An X-ample of the problem

When they don't, the arena for manipulating facts to serve vested interests is widened relative to what it should be. Then policy is in limbo, is hijacked by those interests, or becomes essentially uninformed--the exact opposite of what is needed and expected. The problem applies to scientists even within their own presumed areas of expertise.

Here's an example of this, from genetics, our own field. The current issue of the prestigious (expert-driven?) American Journal of Human Genetics has a picture of 16 US 25-cent coins on the cover, some heads up and others tails up. The picture is meant to illustrate a story about X-inactivation. The dogma has been a phenomenon known as Lyonization, or X-chromosome inactivation: early in a female embryo, each cell randomly selects one of its two X chromosomes, and wraps it up tightly (so to speak) so that its genes cannot be activated. Every descendant cell in the later embryo and through the individual's life is assumed to 'remember' which of its X's to activate and which to keep inactivated, so that an adult female is a mosaic--some patches in her tissues are expressing the variants of the X that she inherited from her father, while the other patches express those inherited from the X donated by her mother.

This means each female cell has one active X, and the idea was that this keeps gene dosage from the X in her cells compatible with the rest of the genome, since males only have one X. Since X-inactivation is random, roughly half a female's cells have paternal, and half maternal X activated. Because X-inactivation occurs early and randomly, every female is different in terms of which patches of her cells are which.
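The classical picture just described can be sketched as a small simulation. This is only a toy model under stated assumptions: each founder cell independently picks the paternal X with probability 0.5, and the number of founder cells (16 here) is a hypothetical value chosen for illustration, not a biological measurement.

```python
import random


def paternal_fraction(n_founder_cells, p_paternal=0.5, rng=None):
    """Fraction of founder cells that keep the paternal X active,
    with each cell choosing independently (classical random model)."""
    if rng is None:
        rng = random.Random()
    paternal = sum(rng.random() < p_paternal for _ in range(n_founder_cells))
    return paternal / n_founder_cells


def simulate_females(n_females, n_founder_cells, p_paternal=0.5, seed=0):
    """Paternal-X fractions for many simulated females (seeded for repeatability)."""
    rng = random.Random(seed)
    return [paternal_fraction(n_founder_cells, p_paternal, rng)
            for _ in range(n_females)]


# Hypothetical numbers: 1000 females, 16 founder cells each.
fractions = simulate_females(n_females=1000, n_founder_cells=16)
mean_fraction = sum(fractions) / len(fractions)
print(f"mean paternal fraction: {mean_fraction:.3f}")
print(f"range across females:   {min(fractions):.2f} to {max(fractions):.2f}")
```

Even in this purely random model the average sits near one half while individual females can differ a good deal from 50-50. Setting `p_paternal` away from 0.5 gives a crude stand-in for an unequal chance of activation.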

Scientists needing melodrama rather than some dull picture of, say, actual chromosomes, the AJHG decided to illustrate probability with a coin. The imaged theory is 8 Heads, 8 Tails. But the cover story makes the point that evidence now shows that the two X's don't necessarily have an exactly 50-50 chance of being activated in a given cell. So, if genes on one of the X's give a growth advantage, more of the female's body may be found to be expressing that one. Similarly, if one X has damaging genes, fewer than half her cells will be found using that one.

This is interesting, and not surprising, even if it hadn't been documented as well in the past. But what is surprising is that it is (or should be) by now well-known that Lyonization does not really work like this! Indeed, various people including the lab of Dr Kateryna Makova here at Penn State, have shown that only part--around 85%--of the X is subject to inactivation. In female cells both X's remain active for the other 15% (raising questions about the dosage-balance view in regard to the genes in those regions). Indeed, there may be variation, even probabilistic variation from person to person or cell to cell, in which parts of the X remain active, and one possibility is that this has to do with various bits of transposable (move-around-able) DNA that may have been inserted there over the ages past (a subject beyond this post). Makova and colleagues have shown that the active regions seem to have been preserved by natural selection from varying as much as the regions subject to inactivation, presumably reflecting something about function, but beyond our point here.

This incomplete inactivation is entirely different from what the AJHG cover story is about. But it's a leading journal, and it is publishing what it basically portrays as a surprising deviation from classical theory....without even acknowledging that the theory has already had a major revision well-known and prominently published.

This may be a minor point in the scope of science (but perhaps not in understanding chromosome and sex evolution!), but it shows how subtle can be the intercalation of misunderstanding (if not dogma) in science. We're not talking here about journalists' mistakes (except to the extent that, in the huge arena of major journals, editorial staff looking for cool cover pictures are people with journalism degrees rather than scientists). The AJHG is one of the major journals in a highly sophisticated field, so one can imagine what the proliferating commercially driven hasty journals are like when it comes to reliability and accuracy.

We all are vulnerable to mistakes, so we're not particularly picking on this one, beyond making the point about the grip of dogma, the reliance on theory once learned but hard to relax, and the problem of anyone, even scientists, even in their own field, and teachers at all levels, keeping up to date. It shows the extent to which even science is a cultural phenomenon, that involves lore as well as 'fact', but upon which opinions are framed and policy is made.

And speaking of imagery and dogma, by the way, why don't you try something: take a handful of quarters, and flip them vigorously a number of times. Do they actually come up half heads and half tails? In fact, if you do enough flips, you would find that they don't.
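One way to read the coin point, assuming ideal fair coins and independent flips (real coins may also be physically biased, which this sketch does not model), is that an exactly even split is itself not the typical outcome. The helper name below is ours; the calculation is the standard binomial formula applied to the 16 coins on the cover.

```python
from math import comb


def prob_exact_heads(n_flips, n_heads, p_heads=0.5):
    """Binomial probability of exactly `n_heads` heads in `n_flips` independent flips."""
    return (comb(n_flips, n_heads)
            * p_heads ** n_heads
            * (1 - p_heads) ** (n_flips - n_heads))


# The cover image: 16 coins, 8 heads and 8 tails.
p_even_split = prob_exact_heads(16, 8)
print(f"P(exactly 8 heads in 16 fair flips) = {p_even_split:.3f}")
```

Under these assumptions the 8-and-8 picture turns up in fewer than one in five tries, so most handfuls of 16 fair coins would not look like the cover.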

Monday, August 22, 2011

Are GM foods safe? Listen to disinterested scientists, not those with vested interests

Civilization depends on our expanding ability to produce food efficiently, which has markedly accelerated thanks to science and technology. The use of chemicals for fertilization and for pest and disease control, the induction of beneficial mutations in plants with chemicals or radiation to improve yields, and the mechanization of agriculture have all increased the amount of food that can be grown on each acre of land by as much as 10 times in the last 100 years.

But, the author says, regulations are getting in the way of progress.

This illustrates some issues regarding the relationship between science and the society that invests in it. The issues are not simple, but they are very important. In this example, the question of whether genetically modified foods are safe is an important issue of our day, but from a policy point of view it needs to be evaluated dispassionately, by parties with no vested interest. And because there are huge vested interests at stake, it behooves the observers of the debate to know who is telling us what and why.

We'd be much more inclined to consider this op-ed piece on its face value if we didn't know the author, Nina Fedoroff, a Penn State professor of biology. She has been a highly placed science adviser to the government, and is a person of strong opinions who is not shy to state them (as the op-ed reflects).

Nina Fedoroff is an excellent scientist, with a long and distinguished record (that's why she was asked to be an adviser). She was a student of Barbara McClintock's and has a fine record of research in plant genetics. But, she has a history of serving on the boards of biotech and GM companies, and she is the author of a recent very pro-industry book on genetically modified foods, so it's totally fair, and highly precedented, to think of her as an advocate for industry rather than a more objective adviser. And advocacy does not necessarily make good science.

Fedoroff says, for example:

... genetically modified crops containing an extra gene that confers resistance to certain insects require much less pesticide. This is good for the environment because toxic pesticides decrease the supply of food for birds and run off the land to poison rivers, lakes and oceans.

This is true as far as it goes. But, as we've written on MT before (e.g., here), the widespread use of insect-resistant plants, genetically modified plants that produce a Bacillus thuringiensis (Bt) toxin that is lethal to many plant pests, is a real boon to many previously insignificant insects, which no longer have to compete with the major targets of the toxin for food or ground, and so thrive.

Farmers then find themselves spraying with insecticides once again, erasing the immediate benefits of Bt plants such as those Dr Fedoroff mentions, the decreased use of pesticide, less runoff into waters, etc. This unsurprising result was predicted by many evolutionary biologists, though Monsanto assured us it wouldn't happen. That it is happening is widespread knowledge, but this didn't make it into Dr Fedoroff's piece. Why not? If the evidence was misinterpreted or is not compelling, but is clearly 'out there', one would expect it to be acknowledged and dismissed. Among the industry's excuses, if it's fair to call them that, is that they'll keep a step ahead of the evolution of resistance to their modifications. But one can't just automatically take their word for it, given the history we already have.

In addition, the op-ed piece focuses simply on food supply, the need to produce more, when much of the problem of feeding the world is political. Even if farmers produced only GM food, 12 million people would still be starving, or on the verge, in Somalia, where militants with guns are preventing food from being distributed. The food is there; the people can't get it. The solution has to be much broader than high tech science, and arguably, resolving political and food policy issues has to be done before we make more food. Opponents of industrialized agriculture argue that more diversified, mixed and smaller scale farming would be better for soil, sustainable, and also able to feed everyone. And there are many other political and economic aspects of the way big agribusiness works relative to local farmers and their incomes, all well-known among the people who think about these things. There may be no simple answers, but the controversies are not simple and objections should not just be dismissed by omission.

How to feed the world is a real and long-standing question. But advocacy pieces by leading scientists who can be taken, or mistaken, as mouthpieces for industry are not the way to move the debate forward -- even if, of course, advocacy pieces written by anti-GM activists can be equally slanted, and equally suspect. We cannot accuse Nina of being wrong about the facts, except in the sense of omission, and we can't even make a knowledgeable argument against (or for) various aspects of the issues. She may be entirely correct, or the objections may be valid but unimportant in the balance of things. The point is that science at the policy level should be less affected by vested interests and, especially, advisers should be dispassionate about the issues so that officials like politicians and bureaucrats, who haven't the expertise to make decisions without external advice, are given advice that is less polarized.

There is no shortage of polarized opinion, and there are plenty of lobbyists who have hardly-restricted access to politicians. It's their job to push a point of view. GM crops are only one example. Climate change, the need for trips to Mars, and even evolution itself are other such issues. Lobbying is a major way our society makes decisions. But even when, as in the case of evolution, one point of view is massively supported by the evidence, a policy adviser should be able to present the perspectives of various points of view rather than simply advocate. Even in the evolution example, politicians should know why some hold 'anti' views in the face of the evidence and why those views aren't supported by the evidence.

We're not stating any opinion about GM crops. The 'op' in op-ed gives authors the right to give opinions. But advocacy can be incompatible with good science, and while it may sell media it can often polarize rather than inform society.

Friday, August 19, 2011

The Age of Reason?

Each of the three guests on the program declared him or herself 'on the fence' with respect to the effect of globalization on religion. Modernity and 'scientism' were supposed to bring secularization, and spread by globalization, but this hasn't been the effect. Religion shows no signs of going away or even morphing very substantially. In fact, it seems to be thriving around the world. Evangelism, Islam, Pentecostal religions are on the march.

Yes, one of the guests said, it's on the march, and globalization leads to opportunities for communication and dialog, but it also leads to fundamentalist certainty, where different religions don't want dialog. This is a common phenomenon, perhaps the standard one, through the ages. Another of the guests pointed out that this isn't only true of religion, but could be a phenomenon of the globalized world in general. Everyone wants to be 'the answer'. It is also true of pure materialists and anti-religious views such as Marxism. It's human.

Said the same guest, the last 200 years have been hard on religion; the French revolution, American Revolution, the rise of nationalism, which has meant the fracturing of the Catholic Church, and its losing land, schools, influence, etc., whole religious systems attacked by fascism and communism, religious systems attacked by scientism, and imperialism. This, he said, has left religious communities with deep suspicion of any organization that says it's going to make the world better, since the last 200 years have seen organizations trying to wipe out religions in order to make the world better. Of course, it's hard to argue that religion has done the job, either.

Science has systematically eaten away at the empirical claims of sacred texts (like 6-day Genesis, Noah's Ark, Red Sea parting, Jonah surviving the whale's gut, 6000 year old earth). Literalist interpretations should by now have been thoroughly undermined by such a march of basically undoubtable materialist success. Any breach in any aspect of a sacred text--including acknowledging that the text could be metaphoric rather than literal--opens the gates of doubt about any other aspect of the Revealed Truth. If this part isn't true after all, then how do we know that other part is true? That is one of the reasons why the literalists don't want to open the door to such options.

The persistence, and even expansion, of fundamentalistic religion in the supposed Age of Reason is a puzzle in a way. (Among other things, it brings us science doubters, like Rick Perry, serious candidate for the presidency of the United States.) One might expect that the march of factual de-confirmation of received texts would, in a rationalist world, have also undermined the other ideas in the texts concerning principles of faith, morals, and even the basic belief that some sort of 'God' is out there--or caused at the very least a retreat to a more generic deism (God exists, and started it all, but doesn't intervene in personalized ways in our lives). A lot of what has occurred in the tilt back towards fundamentalism belies our belief that we're either rational or empirically driven.

But what if you think of it from a Darwinian perspective, in which the only thing that matters in any existential way is surviving to reproduce? From this perspective, it doesn't make any difference what we believe, as long as, as a society, we're identifiable, trustworthy, etc., behaving and conforming predictably and so on, attributes which many religious systems encourage in one way or another, but so does culture generally. 'Rational' just means having a reason for things. Fundamentalists certainly have such reasons, even if most scientists don't agree with their particular accepted truths. Indeed, in this sense, science becomes just another set of answers to the profound questions of life, another fundamentalist certainty, no more or less 'valid', in terms of making it through life, than any other set of answers.

That this is true is supported by the predictability of persons' responses to new events. When someone makes a pronouncement or a major event happens, people interpret it in light of their accepted social, political, religious, or theoretical beliefs. We are rarely swayed from such beliefs by new 'facts'--we either say we don't believe the new facts, we ignore them, or we re-interpret them. Even scientists have political and social, not to mention scientific-theoretical, commitments that lead them (us) to do that. Rationality does not mean seeing the world 'objectively' in the usual sense of that term.

There are fundamentalists in science, who adhere unquestioningly to dogma -- including those who refuse to allow the questioning of any aspects of evolution. Sometimes, in fact, they acknowledge that but say they do that to combat anti-evolution fundamentalists who will mis-use or mis-state any such questioning within science--a strange reason for a scientist to color or ignore facts! But, as we've written many times here on MT, there's no legitimate place for dogma in science, and that's where it should differ from religion. Unlike religion, science is or should be about questioning, challenging, pushing accepted wisdom.

But clearly the age of systematic empiricism doesn't mean that other kinds of belief become unsustainable--either that no one can sustain such beliefs, or that beliefs contrary to facts can't sustain successful human lives.

Thursday, August 18, 2011

Science? Why bother!

So the noble, intellectually deep governor of Texas proclaims basically that he doesn't 'believe' in human-engendered global climate change. He believes that scientists have manipulated the data to make their case. 'Believe' is the appropriate word, and the fact that such a large state with at least some intelligent people in it persists in electing people like him to leading offices says a lot about the role of knowledge in human affairs.

Gov. Perry proclaims that there are 'increasing' numbers of people criticizing the evidence for anthropogenic global warming. This can only be viewed as a willful ignorance of the evidence, of the nature of science, all driven by vested political interests. A governor is a politician and as such isn't necessarily expected to actually know anything--s/he must rely on experts. But a governor should at least be driven by evidence, or should simply state that s/he doesn't care about actual evidence.

That some people criticize the data on climate change--and similarly, evolution, another subject that the benighted Eyes of Texas seem not willing to gaze upon--is clearly correct. Many if not most of the outspoken persons are media clunks or clear, demonstrable cranks. But those aside, there is no reason whatsoever why a scientist could not, should not, or must not challenge the evidence! Of course we, as a community of purportedly knowledgeable people, should.

Most every paper in science has limitations. Were that not true, we'd already know everything and could look for other jobs. Indeed, we on MT are among many who question various aspects of the current views, approaches, or data regarding evolution. If we thought there was doubt that evolution as currently viewed actually happened, it wouldn't mean we were being cranks to say so and say why. It's our job to do that!

But even if the overwhelming avalanche of evidence supports the idea that evolution is a plain fact, there is no reason to do other than question the various details, mechanisms, and ideas about how it works. Such questioning is the only way science can advance beyond dogma.

The same is true of climate change. It is manifestly obvious that we're dumping greenhouse materials into the atmosphere, and that global climate is changing. It is much more difficult to determine how much of global climate change is part of natural cycles, which certainly exist, and how much human activity is affecting such cycles.

But we can take a more detached view and simply note that there is some non-trivial possibility that we humans are major players in such change, and that the change which we already know is occurring (for whatever reason) can cause great social instability (in the form of famines, wars over food or other resources, diseases, and so on). Given that possibility, whether or not 'increasing numbers of scientists' are questioning (some aspects of the data on) anthropogenic warming, it is prudent to try to do something to ameliorate it.

What cretin politicians are doing is to defend ignorance, or in essence to defend established vested interests by denying that there's a problem requiring action.

Doing it right, or at least honestly

One possibility, that would be at least more honest, is for politicians to say "Hey, we like our life style, and we want to protect the wealth we get from the oil patch. Yes, maybe things will change in the 22nd century because of global warming, but we'll let our descendants deal with it. There'll be trauma and maybe big wars or shifts in power, wealth (and food), but so what? Humans in every generation have faced traumas of one kind or another. Remember WWII? 'Nam? AIDS? Our descendants will have theirs to deal with, as we've had ours."

The scientific evidence is irrelevant to such simple de facto, if selfishly short-term, views. But selfishly short-term views are, after all, legitimate views to hold. Denying evolution is another example. It is perfectly legitimate to say one doesn't care to recognize or act on what biologists say, and wishes instead to rely on prayer. Being in accordance with the facts is not a prerequisite for human culture.

A politician, fresh from his revival meeting that prayed for rain, could just be honest in that way, rather than intentionally or, more likely, willfully ignorantly, denying the evidence. Of course, given that his prayer meeting failed to generate even a few clouds, maybe we needn't pay any attention to what he says.

Now, if in the future Texas becomes as withered and sere as its governor's brain, will they still be so detached, or will they change their tune and expect, demand, and provide moral reasons why the rest of the world should provide them with food?

Wednesday, August 17, 2011

New findings in cancer research: Something old and something new

[Image: Melanoma cells invading the brain]

Microbes comprise maybe 90% of the cells in our bodies, being integral to digestion, and other functions. They need us and we need them, and this co-existence is maintained by communication between our cells and our resident microbes. When this goes awry, our cells may be signaled to begin to divide; this aberrant signaling may be responsible for cancers of the digestive system -- the colon, stomach, and esophagus -- and perhaps other organs.

Another possibility is that segments of the non-coding DNA that makes up maybe 98% of our DNA may send aberrant signals that lead to uncontrollable cell division. Pseudogenes, or remnants of gene duplication events long ago that on their own now have no function, or garbled function, may be one of the elements in non-coding DNA that incite uncontrollable cell division.

And finally, micro RNA may inhibit or alter the normal working of messenger RNA, disrupting normal cell signaling. It has become apparent in recent years that micro RNA has a function in the regulation of much normal gene expression, but it seems that it could also be involved in the kinds of abnormal cellular functions that lead to cancer. It's possible that pseudogenes interact with microRNAs to do this.

Well, these alternatives are clearly all possible, but unfortunately the story is the usual type of overkill and melodrama that we see every day in the media. Investigators are making exaggerated claims of new discoveries, rather than simply reporting the additional facts and mechanisms that are being found. Contrary to the sense of the story, nothing in these new findings challenges the basic current idea about cancer, even as they make it clear that the usual approaches for finding genes 'for' cancer, such as genomewide association studies (GWAS), are generally not going to work.

And indeed, there may be some mechanisms that cause cancer but do not alter DNA or create abnormal cells. Instead, such mechanisms may simply induce genetically normal cells to respond to their environment in ways that are mistaken (relative to the survival of the organism). These include some apparent context responses that should lead as a rule to many cells misbehaving in the same way -- polyclonal tumors, in which not all cells are derived from the same single misbehaving ancestral cell. That was an older idea about cancer that hasn't had much credence for several decades.

Cancer is clearly a nasty problem for an organism, and one that involves evolution on the scale of one's individual lifetime, with some similarity to long-term evolution of species. If cancer were to turn out to be polyclonal, it would make it more like infection, in which the body is occupied by descendants of many different infecting bacterial cells or viral particles. Since the descendant cells of each founding cell will then undergo their own evolutionary history, attacking the tumor would become more difficult -- the tumor cells wouldn't share the same characteristics to the extent that a single clone of cells does. The evolution will not be of a single tree of descent.

Viral cancers can be polyclonal if the virus independently transforms different cells in the individual, but this seems unusual. Also, if only a small number of changes are required, and the person inherits one or more of them, and has many cells at risk of somatic mutations to generate the rest, there may be several independent tumors in the same person. There are some examples of this.

But generally, the standard model of a mix of inherited variation that makes a cell less likely to respond normally, complemented by somatic mutations that finish the job of producing a single, badly programmed progenitor cell, seems basically safe. Even if, as seems to be the case, we're finding new aspects of the genome and its behavior that are involved in the production of such a cell.

Tuesday, August 16, 2011

The end of ideas?

[Ideas] could penetrate the general culture and make celebrities out of thinkers — notably Albert Einstein, but also Reinhold Niebuhr, Daniel Bell, Betty Friedan, Carl Sagan and Stephen Jay Gould, to name a few. The ideas themselves could even be made famous: for instance, for “the end of ideology,” “the medium is the message,” “the feminine mystique,” “the Big Bang theory,” “the end of history.” A big idea could capture the cover of Time — “Is God Dead?” — and intellectuals like Norman Mailer, William F. Buckley Jr. and Gore Vidal would even occasionally be invited to the couches of late-night talk shows. How long ago that was.

Indeed, The Origin of Species was a best seller when it was first published.

Regardless of some of the rather pedestrian instances he cites, Gabler says that we're not only living in a 'post-Enlightenment age', in which science and rationality have given way to orthodoxy, faith, opinion and superstition, we're living in a 'post-idea age', in which people are not even thinking anymore.

In part, he says, this is because we're in the Information Age, where the glut of facts available to us means we don't have time to process it all. We prefer knowing things to thinking about them. Twitter, he says, makes the instantaneous exchange of inane information so easy that that's all we're exchanging.

To a large extent we agree, particularly if all you are tuned into is popular culture, where the loudest most obnoxious 'pundit' wins, celebrity gossip rules, and adherence to truth is tenuous at best. Tale-telling way outscores factuality.

What about at universities? Gabler says:

There is the retreat in universities from the real world, and an encouragement of and reward for the narrowest specialization rather than for daring — for tending potted plants rather than planting forests.

This is certainly largely true, at a time in history when the smallest fundable unit is rewarded, in contrast with new and innovative big ideas. Unless they are patentable. This is in a sense a careerist, bourgeois takeover of one of the main places one might expect new ideas to come from. But even this isn't all that new: the really great ideas come from individuals with skill and luck to be in the right context. Many of them would never have made it in the institutional world, where we have to work for a living and hence have to play the game, not make too many waves, etc.

There are those, including Gabler's examples of big thinkers, such as Steven Pinker and Richard Dawkins, who have successfully crossed the line from the academy to popular culture, and have written best-sellers that engage the public, though Gabler wishes the likes of Pinker and Dawkins were more mainstream. But it's a rare thinker who can translate complex, nuanced ideas for the public. Not only are scientists still frowned upon for writing popular books, but it's damned difficult to write about science in an engaging way, and without dumbing down. Or without becoming an ideologue, and selling grand but rather empty or wholly contrived ideas not constrained by serious testing, which it could be argued both of these 'public intellectuals' have become. Most celebrity scientists are past their prime and, as is usual in a media-hungry society, way less conceptually deep or innovative than the image suggests. But, of course, the public love the things they sell, which are usually very well done for what they are.

Ideas are out there, if you stray away from the big media outlets. They aren't hidden -- you can find interesting, non-ideological thinking in some literary magazines these days, even some newspapers still, particularly outside the US, some radio (as you all know, we're partial to the BBC ourselves), some places on the web, including many blogs.

But how much of it is science? The Enlightenment period, which inaugurated modern science about 300 or 400 years ago, engendered the kind of empirical, systematic science that we have institutionalized by now. It can nibble away at the truth, and it can generate massive facts (and factoids). Industrialized as it is now, it can guarantee new, if incremental, knowledge and usually will also generate unexpected facts.

Such discoveries are rife in the life sciences, and we now know scads more about almost any area of biology you want to name. But that is not the same as great new conceptual understanding, of the Darwin or Einstein variety. They and other leaders in conceptual science commented about the stifling nature of universities even in their time. Rare indeed are such things, but at least in the past people were trying. Today, grand theorizing is a big seller, but truly transformative ideas are rare as hen's teeth. The same basic ideas in biology -- theory, if you will -- have been around for well over a century.

It may be that while we don't understand everything about evolution or genetics, we understand enough that our basic theory hasn't changed. Maybe it will, because maybe what we don't understand will force us to new insights of a profound nature. But the institutionalized, industrialized, bureaucratized nature of science does not give any hints as to what they might be, or when it may happen. There are all sorts of speculative wonderments being proposed, such as antimatter, Bosons, multiple universes, and all that. How much will play out, or be relevant to what we want to know about life, is impossible to tell. We have just published a commentary on this subject in regard to evolution and genetics ("Is life law-like?" in the journal Genetics), where we question the basic nature of the current 'theory' in biology, in the context of laws of nature....but we did not discover the next Great Idea (if there is to be one) about life.

It would be more interesting, for us at least, to be able to live in an era when such new, really fundamental insights were dropped into our awareness -- as in Darwin's, Einstein's, or Newton's time. The closest example that may give MT readers a taste of what it would be like is probably the acceptance of continental drift and its implications for geology. Hopefully, something new like that is in the offing. But don't hold your breath (just get back to writing your next grant application)!

Monday, August 15, 2011

Grandeur and complexity: words and theory alike fail to do it justice

Thursday, August 11, 2011

Mendelian inheritance: conclusion

The reason is that these usages are inaccurate at best, and in today's world not needed. We have better computational tools than we did when these things began in the mid 1900s. Instead, for example, of the expected 1/2 for segregation proportions (for traits, not alleles) we can develop estimates of the actual probability of the trait in an offspring of an affected parent. It would be something like the probability of inheritance of the major parental allele, times the probability of a given effect size (trait measure, for example) plus or times something about environmental effects where known plus a similar term for the other parent.
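
To make that verbal recipe concrete, here is a minimal sketch of one possible reading of it. Everything in it is an assumption for illustration: the function and parameter names are hypothetical, the numbers in the usage lines are invented, and the choice to combine the genetic, environmental, and other-parent contributions as independent causes is only one of several ways to read "plus or times".

```python
def offspring_trait_risk(
    p_transmit: float,
    p_trait_given_allele: float,
    env_risk: float = 0.0,
    other_parent_risk: float = 0.0,
) -> float:
    """Rough probability that a child of an affected parent shows the trait.

    p_transmit            probability the major parental allele is inherited
    p_trait_given_allele  probability that the allele, once inherited, produces
                          the trait (or an effect size past some threshold)
    env_risk              contribution from known environmental effects
    other_parent_risk     the analogous genetic term for the other parent

    Assumption: the three contributions act as independent causes, so the
    chance of escaping the trait is the product of escaping each of them.
    """
    genetic = p_transmit * p_trait_given_allele
    p_unaffected = (1 - genetic) * (1 - env_risk) * (1 - other_parent_risk)
    return 1 - p_unaffected


# Classical textbook case: transmission 1/2 and the allele always produces the trait.
print(offspring_trait_risk(0.5, 1.0))

# Hypothetical empirical inputs: incomplete effect plus a small environmental term.
print(offspring_trait_risk(0.5, 0.6, env_risk=0.05))
```

The point of the sketch is only that the inputs are quantities to be estimated from data, and that the expected 1/2 falls out as the special case in which the allele always produces the trait and nothing else contributes.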

Mendel was accused of cheating because his results were too close to his expectations to be due just to chance (there's more to the story since in his case the expectations he was too close to were wrong! see my article in 2002 Evolutionary Anthropology "Goings on in Mendel's garden"). But that is just what genetic risk estimators are doing! They are using 1/2 for the expected risk of the trait, rather than whatever the empirical evidence, properly studied, shows the risk is.

And similarly, by digesting the message, no longer confounding inheritance of traits with inheritance of genetic elements, we can go beyond rigid statistical 'significance' in GWAS, which is of the same kind as false expectations, and consider all the bits of information that we have.

These are seriously erroneous concepts that misdirect science on a large scale. They waste funds and lead to false expectations. It is one thing to pursue ideas that seem, at the time, to be correct even if they eventually prove to be inaccurate--indeed, all ideas are probably of that sort. But it is quite another to pursue ideas that one knows to be wrong, because that's a way to build a career, or because you are in a hurry and can't think of better ideas.

Many scientists accept, absorb, and repeat oversimplified dogma. They teach 'the scientific method', 'survival of the fittest', classical Mendelism, and the 'modern synthesis'. They repeat that "nothing in biology makes sense except in light of evolution" (even though most have only a caricature idea of evolution, or what most life scientists actually do on a daily basis).

Maybe they are aware of the inaccuracies, or maybe when teaching they scorn their students' abilities to know the difference. We think this is not good for science. But there is something else, that you might think is even worse. That is that many if not the vast majority of scientists basically don't bother to think about these things and, like Rhett Butler, simply don't give a damn.

They (we) do our work regardless. We push ahead to prove our favorite point of view, claiming to follow some principles when it suits us, ignoring them when it doesn't. It is strictly pragmatic. There is a lot of dissembling done on a daily basis. Careers have to be made, grants to be garnered, magazines to publish.

All of this is poor practice, but understandable. Nature is complicated, scientists fallible, experiments and technology often imprecise, and so on. We stumble, bumble, and bluff our way through. Things don't go as smoothly as perhaps they might if we were more rigorous relative to theory. Grants pay for work that they shouldn't. But, being fallible and having to make a living, we plow ahead and in the end, here and there, there is progress.

In retrospect, the heroes are remembered and recognized by historians and the media. Laws of Nature are named after them. Statues erected, biographies written. The chaff and blind alleys, and most of us scientists, are forgotten except by historians. Philosophers revise their philosophy of science, and science revises its theories. Life goes on.

That may be the blunt reality, and like other Utopian ideals, our theories of how we act fall short. In the daily, perhaps rather smug and dismissive, hurly-burly of science, the theoretical fineries are ignored if not actually sneered at.

But shouldn't we do better? Shouldn't we strive to train students better and to have a better idea of the nature of Nature, so we can be more efficient or effective? If we are so willing to denigrate alchemy, phrenology, humoral medicine and phlebotomy, as wrong and wasteful....and to criticize the illusions of religious dogma, should we accept dogmatic ignorance in today's science? We think not.

Mendelism is, we think, a good example of something held too rigidly, too long past its sell-by date. It's taken by rote as a basic core fact of biology, in ways that impede progress and waste large amounts of funds that service the professions but not the population paying the bill. We can do better.

Wednesday, August 10, 2011

Mendelian inheritance and evolution. Part III

First, because genetic units, not traits, are what is inherited. A fertilized egg doesn't have legs or a brain, nor does a pollinated pea seed have color, wrinkles, or plant-height. What is inherited are genetic variants that affect these traits in the adult organism.

Mendelism was wrong because most variation, the variation that enabled the modern synthesis to unite discrete Mendelian genetic inheritance with gradual Darwinian evolution, was generally minor relative to Mendel's purposes. Clear discrete states and dominance are not the general ground state of biology. In a population individual genes have many more than 2 alleles (variant sequences) and the effects of their paired combinations in individuals (plants and animals) are associated with more than two trait states (yellow or green). Even at single loci, even when some alleles are in the traditional sense dominant relative to others, the dominance is usually not complete or invariant.

Mendelism was wrong in that the key to uniting discrete genetic inheritance with gradual variation in traits and their evolution was that many different genotypes--combinations of alleles--yield the same trait. When many genes co-contribute, as is widely the case, the trait can be called complex or 'polygenic'. This is because the individual effects are generally small relative to the variation in the trait. Small, basically 'additive' effects predominate, rather than strongly determinative ones. We had to give up on notions of Mendelian inheritance that were then (and still are today) widespread. But that this was the key to the modern synthesis was not widely perceived in these terms.
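To make the "many genotypes, same trait" point concrete, here is a minimal sketch in Python. It rests on simplifying assumptions of our own, not on real data: each locus has just two alleles, a genotype carries 0, 1, or 2 copies of a 'plus' allele at each locus, and every copy adds one equal unit to the trait. The function name is hypothetical.

```python
from collections import Counter
from itertools import product

def trait_value_counts(n_loci):
    """Count how many multi-locus genotypes produce each additive trait value.

    Illustrative assumptions: two alleles per locus, so each locus carries
    0, 1, or 2 copies of the 'plus' allele, and every copy adds one unit.
    """
    counts = Counter()
    # Enumerate every possible combination of per-locus 'plus' allele counts.
    for genotype in product((0, 1, 2), repeat=n_loci):
        counts[sum(genotype)] += 1
    return counts

counts = trait_value_counts(4)
n_genotypes = sum(counts.values())   # 3**4 distinct genotypes
n_trait_values = len(counts)         # only 2*4 + 1 distinct trait values

print(n_genotypes, "genotypes map onto", n_trait_values, "trait values")
for value in sorted(counts):
    print(value, counts[value])
```

Even with only four loci, the genotypes far outnumber the trait values, and the middle of the range is reached by many more genotypes than the extremes. Adding loci, alleles, or unequal effects only widens that gap.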

One could object that, oh yes, what we say applies to multigenic traits, but not to single-gene traits. Aren't there hundreds of these on the books, in human disease and in other species as well? We'd respond that in real life most clear dominance or single-locus traits are much less dichotomous or simple than in general perception or in the textbooks, and adaptation and complex function are demonstrably cooperative and multigenic rather than single-factor competitive. Complex organisms couldn't really function or evolve if they were just a bag of individual dominant, Mendelianly inherited traits. And not only are most traits multi-genic, but genes, no matter how our definitions of what a gene is change, are multi-allelic in natural populations.

Once we realize this, we have to accept that, in general, statistical dominance, which we explained in an earlier post in this series, is a property of populations rather than a biologically inherent property of individuals. It is the exception, and only a partial exception, that is classically Mendelian: partial, because often even in these cases we overlook variation because it may not be great relative to our pragmatic purposes (such as diagnosing the presence of a disease). But pragmatic considerations like this often turn out to be wrong, and science is about understanding nature.

The problems mainly follow from falsely confounding inheritance of genes with inheritance of traits, and from using the extreme of a distribution--the few nearly-dominant examples--to characterize the whole distribution of the effects of individual genetic variants.

At the time of the synthesis, not enough was known about genes (or, at least, seriously and widely enough accepted) to recognize the implications of polygenic inheritance. But now there is no excuse for clinging to theoretical concepts that are misleading and, at best, inaccurate ways to understand life. Doing so has led us down the paths that we imagine will promise simple answers of immediate clinical or commercial value. Each approximate 'hit' entices us to go deeper into the woods of our dreams.

Tuesday, August 9, 2011

Mendelian inheritance and evolution. Part II

A lot of things contributed to this reconciliation. Many experiments, largely stimulated by the 1900 rediscovery of Mendel's 1866 work, began to find mutations that were not grotesque, but had small effects, and were perfectly viable for the organism. Big bad mutations did still arise and had been obvious and easy to see, but now the majority of mutations seemed to be of the lesser type.

Quantitative traits like stature varied gradually rather than by discrete jumps (such as between yellow and green peas). Darwin's distant cousin Francis Galton had shown formally what had been obvious, that even for quantitative traits relatives resembled each other--indeed, they did so more than they might for traits with strong dominance (yellow peas did not resemble their green-pea ancestors in that trait). A variety of investigators, most notably the statistical geneticist RA Fisher (in 1918) recognized how the two types of inheritance could be brought under the same umbrella. If complex or quantitative traits were caused by the effects of many different genes, each making a small contribution, then the resemblance among relatives, the quantitative variation, and Mendelian inheritance were all consistent.
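The reconciliation can be sketched with a small simulation. This is a toy illustration under assumptions of our own, not Fisher's actual derivation: 100 unlinked loci, two equally common alleles at each, every 'plus' allele adding one equal unit to the trait, no environmental effects, and randomly paired parents. All function names are hypothetical.

```python
import random

def random_genotype(n_loci, rng, freq=0.5):
    # Each locus is a pair of alleles: 1 = 'plus' allele, 0 = 'minus' allele.
    return [(int(rng.random() < freq), int(rng.random() < freq))
            for _ in range(n_loci)]

def child_of(mother, father, rng):
    # Discrete Mendelian transmission: each parent passes on one of its
    # two alleles at every locus, chosen at random.
    return [(rng.choice(m), rng.choice(f)) for m, f in zip(mother, father)]

def trait(genotype):
    # Additive model: every 'plus' allele adds one small unit to the trait.
    return sum(a + b for a, b in genotype)

def pearson(xs, ys):
    # Plain Pearson correlation coefficient.
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5

def simulate(n_families=2000, n_loci=100, seed=1):
    rng = random.Random(seed)
    midparents, children = [], []
    for _ in range(n_families):
        mother = random_genotype(n_loci, rng)
        father = random_genotype(n_loci, rng)
        midparents.append((trait(mother) + trait(father)) / 2)
        children.append(trait(child_of(mother, father, rng)))
    return midparents, children

midparents, children = simulate()
r = pearson(midparents, children)
print("distinct trait values among children:", len(set(children)))
print("midparent-child correlation:", round(r, 2))
```

Every allele here is passed on as a discrete unit, yet the children's trait takes many closely spaced values rather than two or three classes, and children resemble their parents. Under these particular assumptions the expected midparent-child correlation is about 0.71, and any environmental contribution would lower it.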

If that was so, then Darwinian gradual evolution was possible even with Mendelian inheritance as the basic fact of life. That's what the modern synthesis showed. It seemed wholly legitimate, indeed perhaps strikingly insightful, and it became the core theory of biology (and in many eyes, we think somewhat erroneously in ways similar to the adherence to Mendelism as the ground-state of inheritance, it still is). The formal theory of evolution or, to many, of life was the mathematical theory called population genetics. Well, Isaac Newton said something to the effect that if it can't be expressed mathematically it can't be a law of Nature, so we needed something real, not soft for biology!

It is strange for a central theory in a serious science to say nothing whatever about actual traits of the entities--organisms--that it purports to explain, but that's what population genetics does. It says only that variation is composed of discrete Mendelian (that is, discretely transmitted) units of inheritance ('genes' has been the word for them), and that by chance and mainly by the systematic force of natural selection, changes in the frequency of genetic variants are responsible for variation among organisms and among species. Surprisingly, this theory became accepted even before there was any real theory of what a 'gene' is! That means it was such a blanket view, so mathematically rigorous, that it could be universally applied to entities that hadn't even been discovered. Reconciling this theory with actual traits has only come in recent decades under rubrics like EvoDevo (the evolution of development). Our book, Mermaid's Tale, deals with these issues extensively, and we try to show that there has been far too much stress in biology that rested on the Competition-among-clearcut-units-is-almost-everything worldview of the modern synthesis.

The dogma became that Mendelism and Darwinism are compatible.

But in a real sense, we think, this is fundamentally wrong. The modern synthesis essentially was possible only because Mendelian inheritance was itself wrong!

Monday, August 8, 2011

Wicked smart apes

I tried to hate it. I really did.

But despite all the Hollywood violence; despite its (inadvertent but dangerous) glorification of the life of a pet chimp and of having one; despite the digital movements that weren’t always quite right… I still enjoyed Rise of the Planet of the Apes.

[If you’re worried about spoilers, (A) Don’t read the title of the movie, and (B) Don’t read any further until you’ve seen it. But to be honest, I'm not sure I reveal anything that wasn't already revealed in the trailers.]

I can't control how apologetic I feel for liking this flick so much. As a human I care deeply what other humans think of me and my movie tastes. And in a weird way I care what chimps, bonobos, gorillas and orangutans would think of me liking it. I guess I shouldn't apologize for being human and I can't easily stop being such a dork.

Perhaps it was the near-future sci-fi possibility of it. Perhaps it was all the sneaky little throwbacks to the original flick. Perhaps it was the attempt to tackle issues of personal bias, emotions, and capitalistic greed in the world of science. Perhaps it was the way James Franco wore that little white lab coat. Perhaps it was my adoration of apes overpowering the fact that these were mere digitized computer actor-humans. Perhaps it was the triumph of the apes! Perhaps it was impossible to go anywhere but up from my subterranean expectations. Perhaps I’m just a human and we humans love our big loud, manipulative blockbuster movies, especially ones that ask, “What does it mean to be human?”

The shows were all sold out on Sunday in West Warwick, so I’m betting most of my students will see this movie—if not this summer, then soon. And I’m sure to be fielding the questions they’re bound to have after watching it. You may have to field the same ones.

I may even use the movie as a teaching tool to help with topics like gene therapy, virus biology and therapeutic use, non-human disease models and test subjects, transgenic lab animals, and inheritance.

Although I’ve worked with custom-engineered virus vectors to modify and to shut down specified protein synthesis in epithelial cells, my experience stops there. And to help me try to make sense of this movie, I asked Ken and Anne to answer some questions that moviegoers are bound to wonder about.

Ken and Anne: Fire away.

Holly: In the movie Rise of the Planet of the Apes, a scientist invents a possible treatment for Alzheimer’s that regenerates neurons and they test it on chimpanzees in a fantastic lab (and the scientist also administers it to his father at home). The delivery system for the treatment is a virus vector injected into the bloodstream (for humans) or administered through a gas mask (for the lab chimps) that changes known genes associated with Alzheimer’s in humans (not chimps!). When a chimpanzee (who does not have Alzheimer's) is infected with the virus she becomes significantly more intelligent.

1. How does one test a cure for a human disease in non-affected non-humans?

Ken: We've not seen the movie but here are some guesses at your questions. In principle (far from practice at the moment), one could get such a vector into a person that could target the particular gene in cells, and replace it with a gene the vector carries. (If this were incorporated in the germ line of the mother or father, it would be passed on to their kids as part of their genome.) Testing simply would be taking a DNA sample (any cells--blood, cheek swab, etc) and looking for the sequence of the inserted gene. This is what is done to make transgenic mice (but the gene is inserted into an egg, not breathed in by an adult).

Anne: There are 2 things that normally would need to be tested in developing gene therapy; the system for delivering the genetic modification, and the efficacy of that modification. In principle they could/should be tested separately, so the delivery system would be tested on normal subjects before the efficacy of the cure is tested, so that, if it doesn't work, the researcher knows it's not because of the delivery system. Testing of many pharmaceutical products is done in similar stages -- first determine whether it's safe on normal people, then whether it actually cures. The first stage is often done on 'professional guinea pigs', people who make their living volunteering to test drug safety. But you're right, it's not the cure that's being tested on people without the disease, it's the efficacy or safety of the procedure.

2. The Alzheimer's (AD) cure not only heals neural degeneration (as evident in the human test case), but it improves cognition too, and when both humans and normal chimps are infected their intelligence increases literally overnight. Could that be possible? How?

Ken: It could (in principle) fix damaged neurons in the patient (this is at the moment largely fantasy but by now there may be some precedents--we're not up to date on what claims may be being made.) If the person's inherited genes that led to AD also led to poor cognition, and if changing the gene once their brain is developed could goose up the neurons' activities, then this, too, could occur in principle. Suppose for example that the problem were a neurotransmitter receptor that was somehow not very efficient, and this were replaced so that signals traveled between synapses more rapidly. Again this is all 'suppose' at present!

Anne: If intelligence is due to synapse speed, say, one could imagine that it could be upgraded quickly. It's harder to imagine that the biochemistry underlying chimp intelligence is the same as that which causes dementia, and that therefore they'd have the same fix!

3. Also--and this is the real question I’m interested in discussing, especially considering the recent Mendel-Wasn’t-Right theme here on the MT!--a female chimp who has been infected actually passes the positive genetic effects on to her offspring. They even remark how her son is intelligent because it's "in his genes." How could this be possible?

Ken & Anne: In the same way as related to #1 above, the offspring would inherit the faster-firing receptor gene and would be smarter.

All of this assumes that one gene change would work across genomic background variation, with no side effects, and all that. But the dream of real gene therapy has been to do what you're describing (again, we didn't see the movie). A good example would be replacing sickle cell hemoglobin (the beta globin gene) with a normal version, or replacing the mutant Tay-Sachs or cystic fibrosis gene with normal sequences. But to be inherited it has to involve the germ line cells.

There are some known mechanisms that illustrate how such a dream scenario could be plausible. Cells have receptors that bring what binds to them into the cell (usually, this is for some normal cell response to the environment). A virus could be engineered to be taken into some specific cell, like a neuron, in this way. The virus could be designed so that the genes it carries are made into RNA corresponding to the 'good' gene version, along with code for a protein like reverse transcriptase that turns RNA into DNA and inserts it into chromosomes. The latter is how viruses currently incorporate into DNA and cause trouble; our genomes are littered with such inserted elements. The difference is that they insert only occasionally and even then into random places in the genome, or places of their choosing.

To get this into places of our choosing, we would have to engineer the system to recognize some sequence of the target gene area and insert the virus's passenger gene at that place, excising the current (bad) gene there.

In any cell in which this occurred, the transgene would have replaced the normal gene, and the job would be done for that cell. If in a sperm or egg precursor, then that would be transmitted to (half of) the person's offspring.